Vertically-integrated stack

Full ownership across all layers - for predictable cost, performance, and reliability

In-house AI Lab

Turning AI research into customer wins & platform capabilities.

AI developer ecosystem

Interfaces for provisioning and managing platform resources.

Inference platform

Platform layer for running and scaling AI workloads.

Cloud compute

Virtualized resources for user-managed environments.

Core infrastructure

Compute, storage, and networking powering platform operations.

Data center foundation

End-to-end control for predictable cost, performance, and reliability.

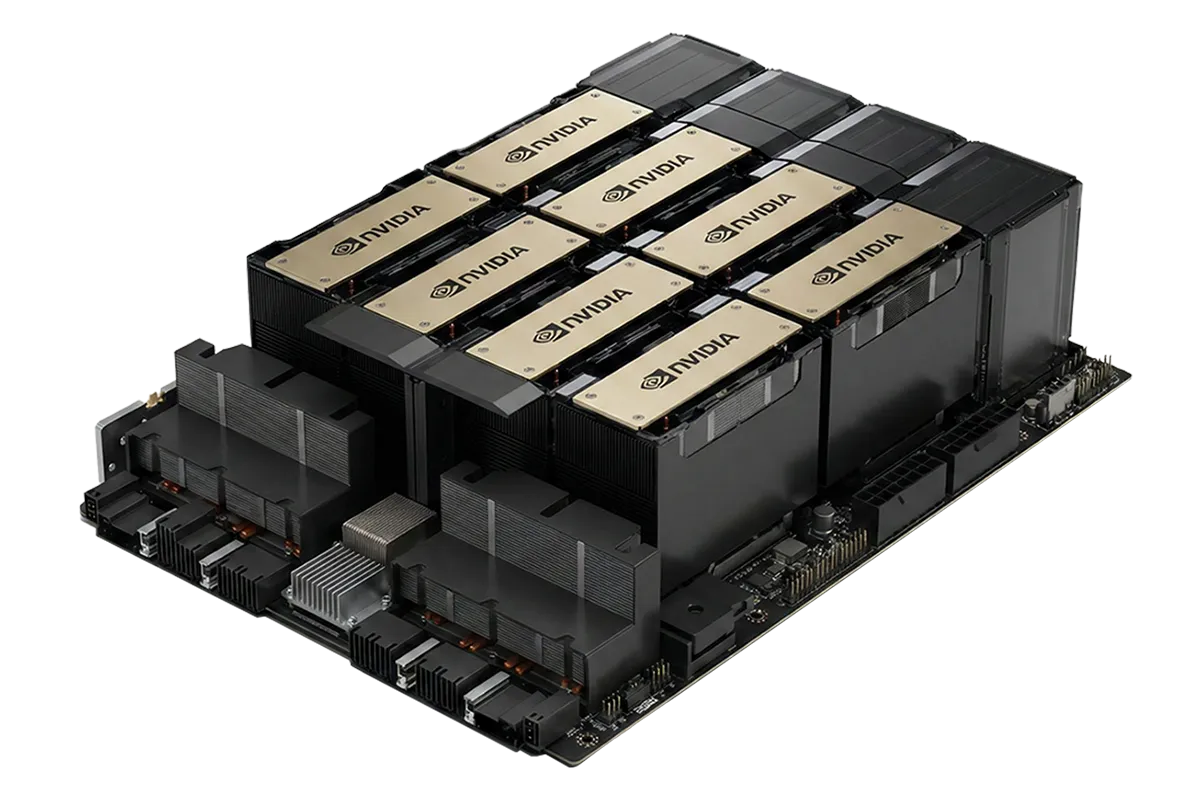

NVIDIA® Preferred Partner

Verda has advanced its role in the NVIDIA Partner Network (NPN), earning Preferred partner status.

This achievement reflects Verda's consistent excellence in delivering NVIDIA technologies, including some of the earliest deployments of Blackwell Ultra platforms in Europe, namely NVIDIA GB300 NVL72 and NVIDIA HGX™ B300.

Powering the entire AI model lifecycle - at any scale

The Verda Cloud Platform

GPU Instances

On-demand virtual machines powered by NVIDIA GPUs with pay-as-you-go (PAYG) pricing

Instant Clusters

Self-service access to 16x-128x GPUs with InfiniBand interconnect

Bare-metal Clusters

Custom-built GPU clusters tailored to your specifications and serviced by our experts

Serverless Containers

Auto-scaling endpoints for containerized models with pay-per-request pricing

Managed Endpoints

Pre-configured API endpoints for cost-efficient inference of SOTA AI models at scale

Co-development

Custom full-stack AI solutions for your use case, built and maintained by our experts

Case study: ExpressVPN

ExpressVPN needed a way to run sensitive AI workloads securely for an industry-first secure LLM product, without compromising on performance or the ability to scale.

They partnered with Verda to develop and test a Confidential Computing solution and build a scalable secure enclave on what was then the latest Blackwell architecture.

Software: Collaborated on enabling and optimizing Confidential Computing on the latest NVIDIA hardware

Hardware: Gave ExpressVPN access to the NVIDIA B200 accelerator, as well as other accelerators based on the Blackwell and Hopper architectures, with effective scaling

Industry first at scale

Immediate access to latest hardware

Hands-on support and collaboration

Powering AI innovators

Customer spotlights

Having direct contact between our engineering teams enables us to move incredibly fast. Being able to deploy any model at scale is exactly what we need in this fast-moving industry. Verda enables us to deploy custom models quickly and effortlessly.

Iván de Prado, Head of AI

Our entire language model journey is powered by Verda's clusters, from deployment to training. Their servers and storage ensure smooth operations and maximum uptime, so we can focus on achieving exceptional results without worrying about hardware issues.

José Pombal, AI Research Scientist

Verda powers our entire monitoring and security infrastructure with exceptional reliability. We also enforce firewall restrictions to protect against unauthorized access to our training clusters. Thanks to Verda, our infrastructure runs smoothly and securely.

Nicola Sosio, ML Engineer

Verda is the perfect mix of being nimble and having production-grade reliability for a low-latency service like ours. Our startup times and compute costs both dropped significantly. With Verda, we can promise our customers high uptimes and competitive SLAs.

Lars Vågnes, Founder & CEO

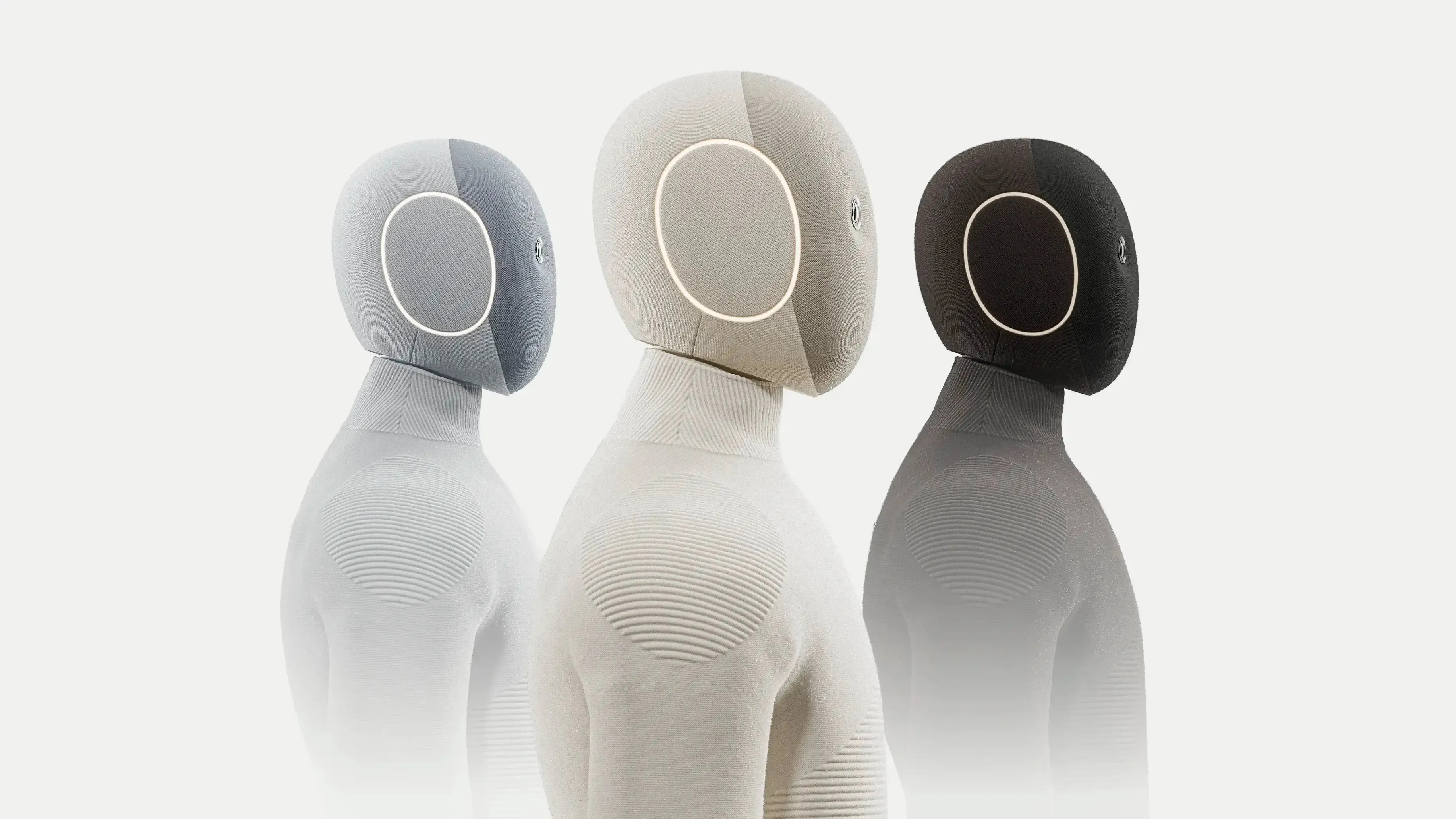

In-house AI Lab

Turning frontier research into customer wins and platform capabilities

1X World Model

Verda collaborates with 1X on building multi-GPU inference for the 1XWM generative video model. Because video quality drives task success, this work enables solving more complex tasks in household autonomy.

The SGLang Project

Verda sponsors SGLang and its collaborators with access to compute resources and infrastructure support. SGLang’s recent explorations into RL training with FP8 and INT4 utilize NVIDIA Hopper, Blackwell, and Blackwell Ultra platforms.

1X World Model Challenge

The Revontuli team, made up of Verda's in-house engineers, won both tracks: sampling and compression. For this challenge, the team used Verda's Instant Clusters with Blackwell GPUs and InfiniBand interconnect.

The full-stack AI cloud of tomorrow

Verda at a glance

Full-stack AI

Flexible architecture for efficient experimentation, training, and inference at any scale.

Efficient

Cutting-edge hardware with compute, storage, and networking optimized for peak efficiency.

Developer-first

Web console, developer docs, API, native SDK, Terraform, and more.

Reliable

Historical uptime of over 99.9% with fair compensation for service disruptions.

Expert support

Proactive support from our experienced team of ML craftsmen and infrastructure experts.

AI R&D

In-house expertise from contributing to frontier research and open-source projects.

Cost-effective

Streamlined GPU access at up to 90% lower costs than hyperscalers. Long-term discounts available.

Secure and sovereign

European service that complies with GDPR and adheres to SOC 2 Type II, ISO 27001, 27017, 27018, and 27701.

Sustainable

Hosted in efficient Nordic data centers that utilize 100% renewable energy sources.